Discover how software defined automation cuts changeover time from weeks to hours—and when it's worth the investment.

Software-Defined Automation: The Future of Flexible Industrial Systems

Production lines run the same sequence — until a customer order shifts, a supplier swaps a material spec, or a controller card finally gives up after fifteen years on the rail. At that point, two operations diverge sharply. One sends engineers into the cabinet to rewrite ladder logic, schedule downtime, and queue an OEM support ticket. The other reconfigures from a laptop in under an hour, tests against a digital twin, and pushes the change live with a rollback ready. Same equipment vintage. Same product mix. Different economics. The difference isn't faster people. It's how their automation thinks. This guide covers what software defined automation actually changes about industrial operations, the three architectural patterns that dominate real deployments, where it doesn't win, and how to assess whether your operation is ready to pilot.

Table of Contents

- Why Hardware-Locked Automation Is Becoming a Competitive Liability

- The Core Difference — Logic Separation From Hardware

- Three Architectural Patterns for Software Defined Automation

- The Five Capabilities That Separate Real Software Defined Systems From Repackaged PLCs

- Where Software Defined Automation Doesn't Win

- What the Americold Deployment Actually Proved

- A Readiness Assessment for Software Defined Automation

Why Hardware-Locked Automation Is Becoming a Competitive Liability

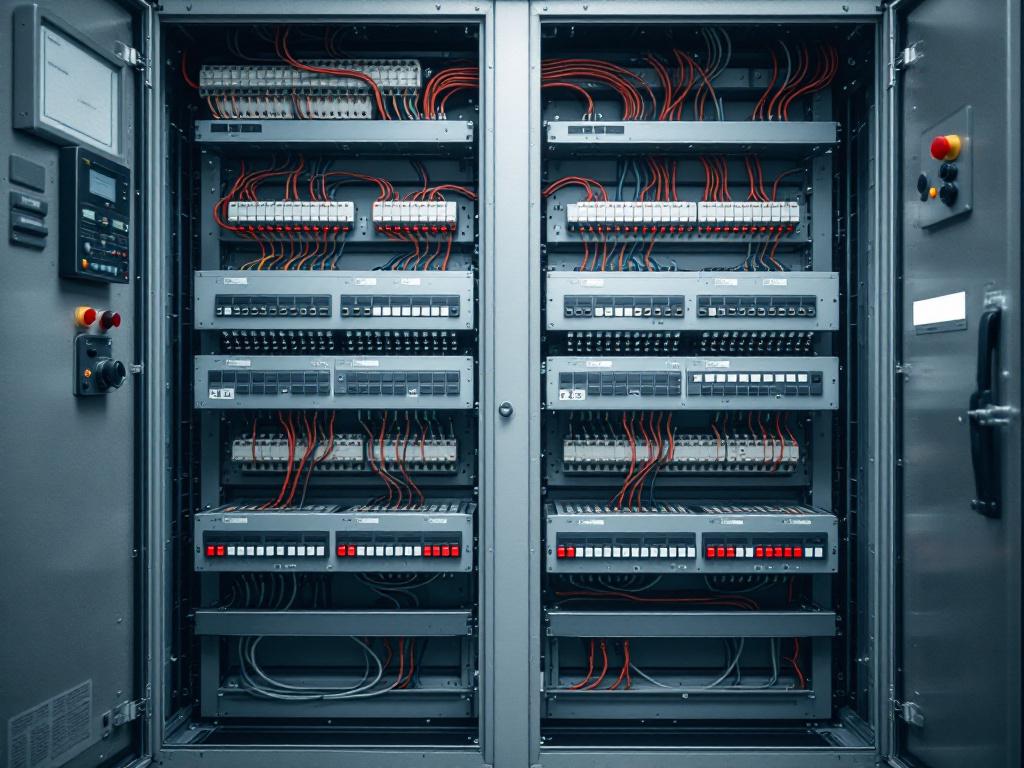

Traditional industrial automation hard-codes control logic into firmware running on programmable logic controllers — Siemens S7, Rockwell ControlLogix, Mitsubishi MELSEC, Omron, and a long tail of regional brands. The controller is the logic. Process changes happen one of two ways: an engineer reprograms the controller in a vendor-specific language (ladder logic, structured text, function block diagrams under IEC 61131-3), or somebody opens the cabinet and rewires physical I/O. Both paths stop the line. Both require specialist labour. Both create artifacts only the original author fully understands.

This architecture made sense when product lifecycles were measured in years and a line ran the same SKU for an entire shift pattern. It makes less sense when changeovers happen daily and supplier specs drift quarterly. According to IoT Analytics, the industry is moving away from "rigid, hardware-centric automation toward modular architectures" precisely because rigidity compounds across product variants, shift patterns, and equipment generations. The cost stack has four layers, and each one gets worse as your product mix gets more dynamic.

Reconfiguration downtime is the most visible cost. Lines stop while engineers reprogram, validate, and commission. A modest sequence change on a packaging line can absorb a full shift; a more involved change pulls multiple shifts across engineering, operations, and quality. The downtime itself is only part of the bill — the bigger number is opportunity cost from delayed product introductions and queued change requests that never get prioritized because the reconfiguration overhead is too high to justify small experiments.

Vendor lock-in is the second cost, and it compounds annually. Once a plant standardizes on one OEM's controller language and toolchain, swapping vendors means retraining staff, rewriting logic libraries, and re-validating safety functions. The switching cost grows with every line added to the same standard. Most plants stay locked in not because the original choice is still optimal, but because the migration math gets uglier each year.

Skill dependency is the structural cost nobody talks about until it bites. Specialist controller programmers — particularly those fluent in older platforms or regional brands — are scarce, expensive, and aging out of the workforce. When the engineer who wrote your original logic retires or leaves, the documentation usually doesn't capture the implicit decisions baked into the code. Tribal knowledge walks out the door, and the next change becomes archaeology before it becomes engineering.

Inability to A/B test is the cost that prevents continuous improvement from compounding. Hard-coded logic discourages experimentation: trying a new sequence means committing to it, and rolling back means another reprogramming cycle. Plants stop trying optimizations because the friction outweighs the expected gain. Process improvement plateaus, then slowly degrades as the gap between actual conditions and original assumptions widens.

Decoupling logic from hardware doesn't eliminate constraints — it makes them visible and manageable.

These four costs are why the World Economic Forum frames industrial automation as approaching an inflection point. Software defined automation is the architectural response — separating control logic from controller hardware so that reconfiguration becomes a software task, not a physical one. The rest of this guide unpacks how that separation actually works, where it pays back, and where traditional systems still hold the line. If you're evaluating broader automation strategy, the architecture question precedes the vendor question by about six months.

The Core Difference — Logic Separation From Hardware

In traditional systems, the controller is the logic. In software defined automation, the controller becomes an execution endpoint and the logic lives in a centralized software layer, often called a virtual PLC or virtualized control runtime. In packaging research from Bosch's SDM4FZI program, Activity Leader Patrick Wiener describes virtualized control as software that provides "the functions of a conventional, hardware-based control system in a virtualized environment on standard computer systems without having to rely on specialized hardware." [VENDOR SOURCE — Bosch is a manufacturer of automation hardware and has commercial interest in this framing.] The architectural shift sounds incremental on paper. Operationally, it changes which problems are easy and which are hard.

| Aspect | Traditional Automation | Software Defined Automation |
|---|---|---|
| Where control logic lives | Firmware on the controller | Centralized software layer, decoupled from hardware |
| Process change mechanism | Controller reprogramming or rewiring | Software update or parameter change |
| Reconfiguration time | Days to weeks | Minutes to hours |
| Hardware coupling | Tight: a sensor swap may force a controller change | Abstracted via software drivers |
| Skill profile required | Vendor-specific controller language expertise | General software engineering plus domain knowledge |
| Multi-vendor integration | Proprietary protocols, manual bridging | API-driven interoperability |
| Scaling unit | Per-line, per-site | Per-machine to per-global-portfolio |

The most underrated row in that table is skill profile. Moving control logic into a software layer shifts the talent pool from a comparatively small group of specialists for each controller brand to anyone with general software engineering skills plus process domain knowledge. That's a structural change in hiring economics that compounds over five-year horizons. Plants that struggle to find a senior Rockwell programmer can find a competent Python or C# engineer in any major labour market, and that engineer can ramp on the process domain faster than a controls specialist can be trained from scratch.

The second underrated row is scaling unit. Per the World Economic Forum analysis, software defined automation enables operations to scale "from individual machines to entire global plants" — a coordination pattern that is structurally impossible when each line runs its own bespoke controller logic with no shared abstraction layer. The IoT Analytics framing of API-driven interoperability is the same point from a different angle: when integration is a software contract rather than a physical wiring diagram, multi-site coordination becomes a configuration problem rather than a project.
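The "software contract" idea, and the "Abstracted via software drivers" row in the table above, can be made concrete with a hardware abstraction layer. The Python sketch below is illustrative only: `TemperatureSensor`, the two vendor drivers (one assumed to report tenths of a degree Celsius, the other degrees Fahrenheit), and `over_temperature` are hypothetical names, not any vendor's API. The point is that the application logic depends only on the abstract interface, so swapping a sensor means swapping a driver rather than rewriting logic.

```python
from abc import ABC, abstractmethod
from typing import Callable


class TemperatureSensor(ABC):
    """Abstract interface that control logic depends on (hypothetical HAL)."""

    @abstractmethod
    def read_celsius(self) -> float:
        """Return the current temperature in degrees Celsius."""


class VendorASensor(TemperatureSensor):
    """Hypothetical driver for a sensor reporting tenths of a degree Celsius."""

    def __init__(self, raw_reader: Callable[[], float]):
        self._raw = raw_reader

    def read_celsius(self) -> float:
        return self._raw() / 10.0


class VendorBSensor(TemperatureSensor):
    """Hypothetical driver for a sensor reporting degrees Fahrenheit."""

    def __init__(self, raw_reader: Callable[[], float]):
        self._raw = raw_reader

    def read_celsius(self) -> float:
        return (self._raw() - 32.0) * 5.0 / 9.0


def over_temperature(sensor: TemperatureSensor, limit_c: float) -> bool:
    """Application logic: written once, independent of which driver is plugged in."""
    return sensor.read_celsius() > limit_c
```

Unit conversion lives in the drivers, so the comparison in `over_temperature` never changes when the hardware does.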

Three Architectural Patterns for Software Defined Automation

Software defined automation is not one architecture. Three patterns dominate real deployments, each making different tradeoffs on latency, uptime, integration scope, and IT maturity. Picking the right one is more consequential than picking the right vendor — the wrong pattern produces a deployment that gets blamed on the technology when the real failure was sequencing.

Centralized Control With Distributed I/O

A single high-availability software runtime, typically on on-premises servers or industrial PCs, executes control logic for an entire line or cell. Remote I/O modules handle physical signals over deterministic field buses (PROFINET, EtherCAT, EtherNet/IP). Wins when: the facility is geographically compact, the network is reliable with round-trip times under 10ms, and uptime requirements allow the centralized runtime to be the focal point of redundancy engineering. Tradeoff: a single architectural blast radius. If the runtime fails and redundancy isn't engineered carefully, the entire line stops. Typical use case: discrete manufacturing cells, packaging lines, mid-complexity assembly operations where the whole process fits inside one network segment.
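Redundancy engineering for the centralized pattern often starts with a heartbeat watchdog. The sketch below is a minimal Python illustration with hypothetical names (`ControlRuntime`, `FailoverMonitor`) and an assumed milliseconds timestamp convention. It promotes the standby only when the active runtime's heartbeat is stale *and* the standby's is fresh. It deliberately omits process-state synchronization and split-brain protection, which any real high-availability runtime must handle.

```python
from dataclasses import dataclass


@dataclass
class ControlRuntime:
    """A hypothetical control runtime instance (primary or standby)."""
    name: str
    last_heartbeat_ms: int = 0


class FailoverMonitor:
    """Decides which runtime executes the control logic, based on heartbeats."""

    def __init__(self, primary: ControlRuntime, standby: ControlRuntime, timeout_ms: int):
        self.active = primary
        self.standby = standby
        self.timeout_ms = timeout_ms

    def heartbeat(self, runtime: ControlRuntime, now_ms: int) -> None:
        """Record that a runtime reported healthy at time now_ms."""
        runtime.last_heartbeat_ms = now_ms

    def check(self, now_ms: int) -> str:
        """Return the name of the runtime that should be executing logic."""
        active_stale = now_ms - self.active.last_heartbeat_ms > self.timeout_ms
        standby_fresh = now_ms - self.standby.last_heartbeat_ms <= self.timeout_ms
        if active_stale and standby_fresh:
            # Promote the healthy standby; the old primary becomes the new standby.
            self.active, self.standby = self.standby, self.active
        return self.active.name
```

Requiring a fresh standby before switching avoids failing over onto a runtime that is itself down.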

Edge Computing With Decentralized Logic Nodes

Control logic runs on industrial edge devices physically near the equipment they control. Each node is autonomous; an orchestration layer coordinates but does not gate execution. The Bosch Research SDM4FZI program describes edge control as exactly this pattern: control applications transferred from production lines to edge runtimes that operate independently of cloud connectivity. Wins when: latency-critical loops require tight determinism (sub-50ms for pick-and-place and high-speed inspection, and often a millisecond or less for coordinated motion control), plant topology is distributed across buildings or sites, or mixed-criticality environments demand that some processes keep running when the network drops. Tradeoff: more nodes to version, patch, monitor, and secure. Typical use case: large multi-cell plants and refrigerated logistics. Americold, for example, consolidated 20+ heterogeneous control systems with edge-orchestrated software, as reported by CrossnoKaye.
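The property "coordinates but does not gate execution" can be sketched as an edge node that keeps its last-known-good configuration whenever the orchestrator is unreachable. Everything here is hypothetical: `EdgeNode`, the `setpoint_c` key, and the simple on/off compressor rule (no hysteresis) stand in for real control logic in a refrigerated facility.

```python
from typing import Callable, Optional


class EdgeNode:
    """Hypothetical edge runtime whose control loop never waits on the orchestrator."""

    def __init__(self, initial_config: dict):
        self.config = dict(initial_config)  # last-known-good configuration

    def sync(self, fetch: Callable[[], Optional[dict]]) -> bool:
        """Try to pull new configuration; keep the current one if that fails."""
        try:
            new_config = fetch()
        except (ConnectionError, TimeoutError):
            return False  # orchestrator unreachable: carry on with what we have
        if not new_config or "setpoint_c" not in new_config:
            return False  # reject empty or incomplete configuration
        self.config = dict(new_config)
        return True

    def control_step(self, measured_temp_c: float) -> bool:
        """One local loop iteration: run the compressor while above setpoint."""
        return measured_temp_c > self.config["setpoint_c"]
```

Because `control_step` reads only local state, a failed `sync` degrades coordination but never stops the loop.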

Cloud-Orchestrated Multi-Site Coordination

Logic still runs on edge or on-premises runtimes — the cloud does not execute control loops. The cloud layer handles cross-site orchestration, fleet-wide configuration management, analytics, version control, and incident response. Wins when: the operation spans multiple sites and the value lies in coordinated portfolio response. Americold's reported $600,000 in savings during a single heat-wave event came from coordinated response across 20+ facilities, not from cloud-executing the control loops themselves. Tradeoff: cybersecurity surface area expands, cloud connectivity becomes part of the operational dependency map, and data sovereignty enters the design conversation. Typical use case: multi-plant enterprises, cold chain operators, distributed energy assets, and any operator whose ROI thesis depends on cross-site visibility rather than single-line performance.
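Fleet-wide configuration management largely comes down to knowing which logic version each site actually runs and rolling changes out in waves. The following is a minimal sketch, assuming sites report their running version to the orchestration layer; the `Fleet` class, version strings, and wave size are hypothetical. Consistent with the cloud's orchestration-only role, nothing here executes a control loop.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Fleet:
    """Hypothetical orchestration-layer view of logic versions across sites."""
    target_version: str
    site_versions: Dict[str, str] = field(default_factory=dict)

    def report(self, site: str, version: str) -> None:
        """Record the version a site's local runtime says it is executing."""
        self.site_versions[site] = version

    def drifted_sites(self) -> List[str]:
        """Sites whose running version differs from the fleet-wide target."""
        return sorted(s for s, v in self.site_versions.items() if v != self.target_version)

    def rollout_waves(self, wave_size: int) -> List[List[str]]:
        """Group drifted sites into waves so one bad release cannot reach every site at once."""
        drifted = self.drifted_sites()
        return [drifted[i:i + wave_size] for i in range(0, len(drifted), wave_size)]
```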

The right architecture isn't the most sophisticated — it's the one that lets you change process logic without touching hardware.

The mistake most teams make is choosing the most sophisticated pattern by default. Cloud-orchestrated sounds modern; it is also the highest-complexity option and rarely the right starting point. The pragmatic sequence is usually: prove the architectural separation works on one line using the centralized pattern, distribute logic to edge nodes where latency or uptime demands it, then orchestrate across sites once the per-site implementations are stable. Skipping steps produces fragile deployments. And because a live orchestration layer expands the cybersecurity surface, mature security controls need to be in place before scale, not after.

The Five Capabilities That Separate Real Software Defined Systems From Repackaged PLCs

Vendors increasingly slap "software defined" onto repackaged PLC platforms. The term is doing a lot of marketing work. Use these five capabilities as a buyer's filter — if a platform fails any of them, the architectural decoupling is incomplete and you'll inherit the same rigidity you were trying to escape, just with a more modern logo on the cabinet.

1. Hardware Abstraction Layer (HAL). The platform must expose sensors, actuators, drives, and I/O through abstracted interfaces, so swapping a temperature sensor or VFD does not require rewriting logic. Without a real HAL, you've just moved firmware into a container. Ask vendors for a written list of supported devices, the path for adding unsupported ones, and concrete examples of customers who have swapped hardware without touching application logic. Vague answers here are disqualifying.

2. Runtime Reconfiguration. Logic changes must deploy live without stopping production, with safe-state fallback if the new logic faults. According to the World Economic Forum, faster commissioning and lower-downtime maintenance are core SDA value drivers — but only if runtime reconfiguration actually works under load. Demand a demonstration on equipment running representative cycle times, not on a marketing rig. Watch what happens when a deployed change throws an exception. If the rollback is manual, the capability isn't real.

3. Cross-Vendor and Cross-Protocol Compatibility. A real SDA platform speaks OPC UA, MQTT, Modbus, EtherNet/IP, PROFINET, and the major OEM protocols natively. According to industrial interconnect supplier Samtec, the Open Process Automation Forum (OPAF) is among the standards bodies working to formalize interoperability requirements. [VENDOR SOURCE — Samtec sells industrial interconnect components.] If a platform only fully supports one OEM's stack with everything else as a "roadmap item," it's a re-skinned proprietary system regardless of how it's marketed.

4. Observability and Versioning. Every logic change must be diffable, attributable, and revertible. This is table stakes in software development and bafflingly absent from much of industrial automation. Demand Git-style version history, role-based change attribution, one-click rollback, and audit logs that survive controller replacement. If your operations team cannot answer "who changed what, when, and why" without phoning the original engineer, the platform isn't software-defined in any meaningful sense.

5. Simulation Before Deployment. The platform must support testing logic changes against a digital twin or simulation environment before pushing to physical equipment. Without this, runtime reconfiguration is reckless rather than agile. Simulation is the de-risking mechanism that makes the rest of SDA defensible to safety leads, operations directors, and auditors. A platform that only validates logic on the physical line is asking you to test in production — which is a technique, not a strategy.
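Capabilities 2, 4, and 5 reinforce each other, and a toy pipeline shows how. The Python sketch below assumes a crude tank model as a stand-in digital twin, tank-level logic expressed as a plain function, and hypothetical names throughout (`Runtime`, `Revision`, `SAFE_STATE`). Deployments must pass simulation, every accepted change becomes an attributed and diffable revision, and a revision that faults during a live scan is rolled back without human action. It illustrates the buyer's filter; it does not model any vendor's runtime.

```python
import difflib
from dataclasses import dataclass, field
from typing import Callable, List, Optional

Logic = Callable[[float], bool]  # hypothetical: tank level (%) -> open the fill valve?
SAFE_STATE = False               # hypothetical safe output: fill valve closed


def simulate(logic: Logic, steps: int = 200, level: float = 50.0) -> List[float]:
    """Crude tank model standing in for a digital twin (all parameters hypothetical).

    Each step the level rises by 2 if the valve is open and always drains by 1.
    """
    trace = []
    for _ in range(steps):
        level = max(level + (2.0 if logic(level) else 0.0) - 1.0, 0.0)
        trace.append(level)
    return trace


@dataclass
class Revision:
    number: int
    author: str
    reason: str
    source: str   # readable logic text, kept so revisions can be diffed
    logic: Logic


@dataclass
class Runtime:
    history: List[Revision] = field(default_factory=list)
    audit: List[str] = field(default_factory=list)
    active: Optional[Revision] = None
    previous: Optional[Revision] = None  # last known-good revision

    def deploy(self, author: str, reason: str, source: str, logic: Logic,
               low: float = 10.0, high: float = 90.0) -> bool:
        # Simulation gate: reject logic that faults or leaves bounds in the twin.
        try:
            ok = all(low <= lvl <= high for lvl in simulate(logic))
        except Exception:
            ok = False
        if not ok:
            self.audit.append(f"{author}: rejected in simulation ({reason})")
            return False
        # Versioning: every accepted change is a numbered, attributed revision.
        rev = Revision(len(self.history) + 1, author, reason, source, logic)
        self.history.append(rev)
        self.previous, self.active = self.active, rev
        self.audit.append(f"{author}: deployed rev{rev.number} ({reason})")
        return True

    def scan(self, level: float) -> bool:
        """One live scan cycle, with automatic rollback if the active logic faults."""
        if self.active is None:
            return SAFE_STATE
        try:
            return self.active.logic(level)
        except Exception:
            if self.previous is not None:
                self.audit.append(
                    f"auto-rollback rev{self.active.number} -> rev{self.previous.number}")
                self.active, self.previous = self.previous, None
            return SAFE_STATE  # the faulting scan drives outputs to the safe state

    def diff(self, a: int, b: int) -> str:
        """Unified diff between two revisions' source text."""
        old = self.history[a - 1].source.splitlines(keepends=True)
        new = self.history[b - 1].source.splitlines(keepends=True)
        return "".join(difflib.unified_diff(old, new, f"rev{a}", f"rev{b}"))
```

One design choice worth noting: the faulting scan returns the safe state instead of immediately re-running the previous revision, so a single bad cycle never produces an output that was not computed by the revision then in charge.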

Where Software Defined Automation Doesn't Win

The marketing literature implies SDA is a universal upgrade. It is not. Three hard constraints determine where it wins and where traditional hard-coded systems remain the right answer. Each constraint has technical specifics that survive vendor pitch decks, and each one has produced failed pilots when ignored. Treat this section as the part of the conversation that prevents an expensive lesson.

Network reliability and latency. Cloud-executed control loops cannot tolerate the round-trip latency of internet-routed traffic, which commonly runs to tens or hundreds of milliseconds and varies from one packet to the next. A pick-and-place cycle requires sub-50ms determinism; a high-speed packaging line may need sub-10ms. This is why mature SDA deployments do not run loops in the cloud: they run loops on edge runtimes and use the cloud for orchestration, telemetry, and configuration management. The World Economic Forum framing of SDA as resilient-first rather than cloud-first captures this exactly, treating the cloud as one option in the architecture rather than the architecture itself. If your plant network has unmanaged jitter, intermittent uplinks, or shared bandwidth with non-industrial traffic, even edge architectures need careful design. Deterministic field buses (PROFINET IRT, EtherCAT) remain the substrate for the loops that actually move metal or product, regardless of what runs above them.
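Because determinism is about the worst case rather than the average, a feasibility check on measured round-trip samples tells you more than a mean latency figure. This sketch uses only the Python standard library. The function name is hypothetical, jitter is approximated as the population standard deviation (one of several definitions in use), and a real assessment would need far larger samples captured under production load.

```python
import statistics
from typing import List


def loop_feasibility(rtt_samples_ms: List[float], deadline_ms: float) -> dict:
    """Summarize measured round-trip times against a control-loop deadline.

    A path is treated as feasible only if no sample exceeds the deadline:
    a good mean with occasional spikes still produces missed cycles.
    """
    if not rtt_samples_ms:
        raise ValueError("need at least one RTT sample")
    misses = sum(1 for rtt in rtt_samples_ms if rtt > deadline_ms)
    return {
        "mean_ms": statistics.fmean(rtt_samples_ms),
        "max_ms": max(rtt_samples_ms),
        # Jitter approximated as population standard deviation (one common definition).
        "jitter_ms": statistics.pstdev(rtt_samples_ms),
        "miss_rate": misses / len(rtt_samples_ms),
        "feasible": misses == 0,
    }
```

With illustrative samples, a plant LAN path of 2 to 3ms clears a 10ms deadline, while an internet path that averages about 109ms and spikes to 300ms misses a 50ms deadline on 40% of samples.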

Safety certification. Safety-rated functions — emergency stop, light curtains, two-hand controls, interlocks under IEC 61508, IEC 62061, and ISO 13849 — require certified deterministic behavior and proven redundancy. Software-defined control runtimes must meet the same certification bars as hardware safety controllers, which is a significant engineering and regulatory lift. The pragmatic deployment pattern is hybrid: keep safety functions on certified safety PLCs (or certified safety modules within a software runtime that has gone through formal certification), and move discretionary process logic into the software layer where the agility benefits accrue. Anyone selling you a single-architecture replacement for safety-rated functions without showing certification documentation is selling marketing, not safety. Ask for the certificates by name, the scope of certification, and the residual functions that remain on hardware. If those answers are uncomfortable, the platform isn't ready for your safety-critical processes — a constraint that applies equally to adjacent disciplines like robotics where certified deterministic behavior governs whether a system can be deployed at all.
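The hybrid boundary can be written down as an explicit assignment plus a check that no safety-rated function lands on an uncertified target. The following is a minimal sketch with hypothetical function and target names. The hard part, classifying each function under IEC 61508, IEC 62061, or ISO 13849, comes from the safety lead's risk assessment, not from code.

```python
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass(frozen=True)
class ControlFunction:
    name: str
    safety_rated: bool  # classification comes from the safety lead's risk assessment


def assign_runtime(functions: List[ControlFunction]) -> Dict[str, str]:
    """Hybrid split: safety-rated functions stay on the certified safety controller."""
    return {
        fn.name: "certified_safety_plc" if fn.safety_rated else "software_runtime"
        for fn in functions
    }


def safety_violations(assignment: Dict[str, str], functions: List[ControlFunction],
                      certified_targets: Set[str]) -> List[str]:
    """Names of safety-rated functions assigned to a target without certification."""
    rated = {fn.name for fn in functions if fn.safety_rated}
    return sorted(name for name, target in assignment.items()
                  if name in rated and target not in certified_targets)
```

Running `safety_violations` on every proposed change turns the boundary from a one-time decision into a check that fails loudly if someone later moves an interlock into the software layer.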

Software defined automation isn't cloud-first — it's resilient-first with cloud as one option.

Cybersecurity and data sovereignty. Centralizing logic centralizes attack surface. A compromised orchestration layer can theoretically reach every connected runtime, which is a different threat model from a compromised single PLC affecting one line. LNS Research has pushed back on the dominant SDA narrative, arguing the real value is "flexibility, security, and efficiency" rather than the cost savings vendors emphasize. Security in this context is earned through architecture, not assumed by virtue of being software. Mature deployments use network segmentation aligned with the Purdue model, zero-trust between orchestration and edge, encrypted command channels, signed logic deployments with cryptographic verification, and air-gapped redundancy for the most critical processes. Data sovereignty matters too: jurisdiction over plant telemetry can constrain whether cloud orchestration is even legally available for some operations, particularly in regulated industries or cross-border operations. Treat cybersecurity architecture as a prerequisite, not an afterthought; retrofitting it after deployment costs substantially more than designing it in from the start.
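A signed logic deployment means an edge runtime refuses any bundle whose signature does not verify. To stay within the Python standard library, this sketch swaps in an HMAC-SHA256 tag with a shared key. Production systems more commonly use asymmetric signatures, so edge nodes hold only a public key and cannot mint valid bundles themselves. The function names and demo key are hypothetical.

```python
import hashlib
import hmac


def sign_bundle(bundle: bytes, key: bytes) -> str:
    """Produce an HMAC-SHA256 tag over the exact bytes of a logic bundle."""
    return hmac.new(key, bundle, hashlib.sha256).hexdigest()


def verify_and_load(bundle: bytes, tag: str, key: bytes) -> bytes:
    """Edge-side check: refuse to load any bundle whose tag does not verify."""
    expected = sign_bundle(bundle, key)
    # compare_digest avoids leaking information through comparison timing.
    if not hmac.compare_digest(expected, tag):
        raise PermissionError("logic bundle failed signature verification")
    return bundle
```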

None of these constraints are reasons to avoid software defined automation. They are reasons to design hybrid deployments where SDA handles the parts of the operation it's good at — discretionary logic, frequent reconfiguration, multi-site coordination — and traditional systems hold the parts where determinism, certified safety, or data sovereignty require it. The teams that get this right treat the boundary deliberately. The teams that get it wrong treat it as a marketing question.

What the Americold Deployment Actually Proved

Most SDA discussion is theoretical. The Americold case is one of the few documented operational deployments with quantified outcomes: a refrigerated logistics operator that consolidated 20+ heterogeneous control systems into a single software-defined platform. Senior Manager of Refrigeration and Engineering Nick Green has spoken publicly about the operational results, as reported by CrossnoKaye. Four lessons generalize from this deployment to other operations considering similar consolidations.

The portfolio-response thesis was validated under stress. During a major heat wave, coordinated response across the consolidated platform delivered $600,000 in savings versus what site-by-site management would have produced. The mechanism wasn't smarter algorithms — it was the ability to coordinate at all. Hardware-locked systems at each facility couldn't have communicated this fluidly without months of integration work, and by then the heat wave would have been over. The lesson generalizes: SDA's strongest ROI moments are event-driven, not steady-state. If your operation never faces coordinated stress events, the portfolio thesis is weaker; if it does, the value is concentrated in the events themselves.

Heterogeneity was the prerequisite, not the obstacle. Americold's 20+ control systems came from different vendors, different generations, and different commissioning years. Software defined automation absorbed that heterogeneity through hardware abstraction rather than requiring forklift replacement. This is the realistic adoption pattern for most industrial operators — you start with mixed installed equipment, not a greenfield. Plants waiting for "the right time" to standardize first and modernize second usually never get there. The right move is treating heterogeneity as the entry condition the architecture is designed to handle.

Operational visibility compounded over time. Once logic and telemetry were unified, the operations team gained ongoing visibility into cross-site behavior that had been invisible before. The $600,000 heat-wave event was one realization of the platform's value; smaller compounding gains accrued continuously through better tuning, faster anomaly detection, and reduced manual coordination overhead. The same telemetry foundation also opens the door to applied AI for anomaly detection and predictive tuning — but only once the data pipeline exists, which is itself a byproduct of the SDA consolidation. Treat SDA ROI as a sum of small recurring wins plus occasional large-event payoffs, not a single line item on a business case. Finance teams asking for one big number to justify the program are asking the wrong question.

The transition was incremental, not big-bang. Consolidating 20+ systems happened over multiple project phases, not a single cutover. This is the right pattern. Big-bang SDA migrations carry outsized risk because they bundle architectural change with operational change with vendor change: three high-risk transitions running concurrently. The pragmatic sequence is: pick one line or one site, prove the architecture, then expand the perimeter once the operations team has lived with the platform through a full quarterly cycle including a real incident.

A Readiness Assessment for Software Defined Automation

This is not a buying decision yet. It is a readiness decision. Walk through these eight questions with your operations, IT, and safety leads in one room. If you can't answer "yes" or "we have a plan" to at least seven, you're not ready to pilot software defined automation, and that's useful information, not a failure. The cost of piloting before you're ready is materially higher than the cost of waiting two quarters and fixing the foundations first. Many operations teams find this assessment more valuable than the vendor evaluation that usually precedes it, because it surfaces the gaps that determine whether any vendor will succeed in your environment.

1. Change frequency justifies the investment. Our production processes change at least quarterly — new SKUs, recipe variants, sequence optimizations, or material substitutions. If the line runs the same SKU for years without modification, the agility argument for software defined automation doesn't apply, and the migration cost won't pay back on flexibility alone. SDA pays back where change is constant.

2. Equipment heterogeneity is real. We run mixed equipment brands across at least one production area. If we're 100% one OEM with no plans to change, we have less to gain from cross-vendor abstraction — though we still gain on logic versioning, runtime reconfiguration, and skills portability. Mostly-homogeneous plants get partial value; heterogeneous plants get the full case.

3. IT and software capability exists or is funded. We have software engineers on staff, a managed service partner under contract, or budget allocated to bring one of those in before the pilot starts. Software defined automation is not set-and-forget — it requires ongoing software lifecycle ownership including patching, version management, observability, and incident response. Operations teams that try to absorb this without dedicated capability usually under-invest until something breaks. Plan the operating model before the platform.

4. Network meets baseline thresholds. Plant network uptime exceeds 99.5%, round-trip latency between control runtimes and edge devices is under 100ms (and under 10ms wherever a centralized runtime closes control loops over the network), and we have visibility into jitter and packet loss on the segments that will carry control traffic. Below these thresholds, fix the network before considering SDA. Layering software-defined control on an unreliable network produces failures that look like platform problems but are actually infrastructure problems.

5. Safety boundary is mapped. We've identified which functions are safety-rated under IEC 61508, IEC 62061, and ISO 13849, and we've confirmed those functions will remain on certified controllers in the hybrid model. We have written sign-off from the safety lead on the proposed boundary. Pilots that haven't done this work end up either delaying for safety review at the worst time or — much worse — moving safety functions onto uncertified runtimes and discovering the problem during an audit.

6. Cybersecurity architecture is designed before deployment. Network segmentation, zero-trust between orchestration and edge, signed deployments, encrypted command channels, and incident response procedures are documented — not aspirational. The cybersecurity lead has reviewed the proposed deployment topology and signed off. Retrofitting security after the platform is live is the most expensive way to do this work, and it produces weaker outcomes than designing it in.

7. A pilot scope is isolatable. We can ring-fence one production line or cell for a 2–3 month pilot without disrupting the rest of operations. The pilot line has frequent enough changeovers to exercise the agility argument, low enough safety criticality to absorb learning, and clear enough metrics to evaluate success. Big-bang rollouts are how SDA programs fail visibly. Isolatable pilots are how they succeed quietly and then expand.

8. Post-pilot ownership is assigned. A named owner — operations or IT or a joint role with explicit authority — owns the software layer after the pilot ends. Unowned platforms decay regardless of how well they're built, because nobody patches, nobody monitors, and nobody answers when the orchestration layer alerts. The ownership decision is more predictive of long-term success than the platform choice itself.

If you scored 7–8: pilot in the next two quarters. Pick a non-safety-critical line with frequent changeovers as the candidate, scope the pilot to a single line for 2–3 months, and define success metrics before the platform is selected.

If you scored 5–6: address the gaps first (most commonly network reliability, cybersecurity architecture, or post-pilot ownership), then pilot. Closing those gaps typically takes roughly one to two quarters and pays back independently of whether you eventually deploy SDA.

If you scored 3–4: software defined automation is not your next move. Fix the foundational layer (IT capability, network, safety mapping, or ownership model) and revisit the assessment in 12 months.

If you scored 0–2: a different operational priority will produce more value sooner. SDA is not currently a fit, and forcing it would consume capacity that should go elsewhere first.
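For teams that rerun the assessment each quarter, the scoring bands reduce to a small function. This is a sketch with a hypothetical name. It treats each answer as `True` for "yes" or "we have a plan" and `False` otherwise.

```python
from typing import List


def readiness_band(answers: List[bool]) -> str:
    """Map answers to the eight readiness questions onto a recommendation band."""
    if len(answers) != 8:
        raise ValueError("expected answers to all eight questions")
    score = sum(bool(a) for a in answers)
    if score >= 7:
        return "pilot within two quarters"
    if score >= 5:
        return "close the gaps, then pilot"
    if score >= 3:
        return "fix foundations, reassess in 12 months"
    return "not currently a fit"
```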